Predictive Modeling

Summary. A series of tests was developed for predictive modeling purposes to answer the question: ‘If this exact same activity were performed with a much larger group that had identical test results, would the end scores be any different?’

This question is asked in order to determine whether errors can be introduced by the nature and arrangement of content within the statistical formula used to calculate the Mann-Whitney (MW) statistic.

The standard way of verbalizing this test is ‘Ho’ vs. ‘H1’. The ‘Ho’ for this review is the null hypothesis, which states that MW results never change regardless of changes in population size. ‘H1’ states that there is a significant change in outcomes as total N increases, even when absolutely no change is found in the distribution of these responses.

One possible problem with this type of test is that it assumes people are essentially clones of those who actually responded. In other words, the assumption is that people will not provide a score unmatched by any previous respondent, so if the original group provided no low values in their responses, respondents with low values are assumed not to exist in these retrials of the original response pattern. Of course, we know this is not the case. Sooner or later someone is going to provide a low value as a score and thereby upset the status quo. But in general, with this approach, this person is considered an outlier whose response exists alongside a normally distributed set of matching responses made in the opposite direction, with these additional outliers countering the impact of the original outlier’s response.

The next thing to note about the assumptions attached to this methodology is that, once the original base data is in place (i.e. at a value of n), the normalized distribution assumption suggests that a doubling of the original n value is needed to begin to demonstrate a significant impact on the original response results. In other words, the assumption being made here at the classroom level is that the students who provided the original results are themselves considered to be status quo and not at all unusual. Therefore, assuming the next selection of students is the same, significant effects have to be produced by that additional group to reverse the results that were initially obtained. In the reporting world for QIAs and PIPs, this assumption and series of events relate to the “Regression to the Means” effect. Regression to the means states that if the original group selected was in fact different in some way, enough to provide questionable and poorly representative results, then the addition of more people will bring the final set of results back to where it should be, at a value approximating the true mean for all outcomes.

In the teaching world, we rarely have outlier problems occurring at the classroom level in programs that continue for a few weeks to months. Of course, unique classes do form in which the students are all nurses one time and all pharmacists another time, but in the end these demographic differences appear to have limited impact on scoring behaviors and habits. In the end, regression to the means can occur, and is perhaps what is being represented by this method of repeated measures in which n is increased in equal increments from one program to the next.

These observations mean that this form of predictive modeling for small group results can be considered reliable and probably should be performed for all evaluations made of small programs in order to see if Type 1 and especially Type 2 errors are likely to occur. At the least, this is simply one more step that can be taken to better quantify your outcomes. For further testing of this application of the methodology, it is even recommended that such applications be tested on programs known to recur for longer periods, with equal class sizes expected in the months to come. It is assumed that these classes will more likely have different averages in their results, and so are not a perfect test of the applications of this new methodology. But this additional step may help provide additional insight into this predictive modeling process, defined as reliable and valid for the time being.

Methodology. Predictive Modeling is the process by which we begin with a series of observations and use these observations to predict later outcomes, assuming future performance activity will be fairly identical in nature to the most recent behaviors documented as part of a given activity or traditionally associated with that activity as part of the status quo. As an example, we might observe the smoking cessation behavior of 100 individuals engaging in a hot line service program, evaluate their performance outcomes, and use this to make conclusions about the human population as a whole concerning the given measurement outcomes.

The basic assumption taken into account here is that the study population as a whole and its outcomes can be correlated with other populations formed within the same system or population of people. Internal validity is when the outcomes of a sample are considered valid and relatable as a whole to the remaining members of the same population sampled or recruited as part of this research activity. External validity is when we try to claim that the same study has relationships to people as a whole, or to a much larger population, such as the assumption that Medicaid people in one part of the country demonstrating a certain response to a certain intervention imply that engaging in the same intervention process elsewhere in the country should also have similar outcomes. Predictive modeling is used to make predictions of outcomes when a particular set of study activities are carried out in much larger populations. It uses the outcomes of one small area to predict how many people will demonstrate similar outcomes and effects in other larger population settings.

The accepted problem with this type of evaluation process is that Type 1 and Type 2 errors can result. We may assume that there will be a similar result in another population at another far away place, and be wrong; or we could assume that the practices engaged in for one location, since they were unsuccessful, will also not work elsewhere.

What about when we take a very small sample? What factors come into play with regard to Type 1 and Type 2 error? If you had an exceptionally small sample to be used, what is the best way to draw conclusions for much larger populations with these outcomes?

It ends up there is one additional factor that can create havoc with outcomes when exceptionally small population sizes are used: a formula-based production of error. It ends up that many formulas are n-specific. When n is too small (especially if n = 0), the equation becomes unreliable. The only way to avoid n = 0 problems is either to avoid designing measurements that could end up with a value of 0 entering the equation, or to make use of other methods of calculating the outcomes, such as different measures with different counts. Another way to interpret n = 0, even with small numbers (i.e. N<25 or N<12), is to simply take a simplistic logistic approach and state that n = 0, but that we cannot assign any confidence to the reliability of this final outcome.
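One concrete way a zero can enter a formula is through the expected counts of a chi squared table: if a response option is never chosen, its expected count is 0 and the corresponding term of the statistic involves a division by zero. The sketch below is only an illustration under stated assumptions. The table values are hypothetical, the function names are made up for this example, and the minimum of 5 is a commonly cited rule of thumb rather than something taken from the studies described here.

```python
def expected_counts(table):
    """Expected cell counts under independence: row total * column total / N."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    return [[r * c / n for c in col_totals] for r in row_totals]


def low_expected_cells(table, minimum=5):
    """List (row, col, expected) for every cell whose expected count is below `minimum`."""
    return [
        (i, j, e)
        for i, row in enumerate(expected_counts(table))
        for j, e in enumerate(row)
        if e < minimum
    ]


# Hypothetical pre/post counts for three response options; option 1 was never chosen.
table = [[0, 3, 4],
         [0, 5, 2]]

flagged = low_expected_cells(table)
zero_cells = [(i, j) for i, j, e in flagged if e == 0]

print("cells below the minimum:", flagged)
print("cells with expected count 0 (chi squared term undefined):", zero_cells)
```

Running a check like this before the analysis shows up front whether the table can be evaluated at all, or whether a different measure or grouping of responses is needed.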

We can overcome the small numbers problem by artificially modifying the outcomes. This modification is an assumed part of the research and evaluation process, and is stated upfront as an optional pathway taken to evaluation that simply adds one more assumption about the research groups employed in this research activity.

If we assume that the population is properly produced or sampled, and that it is a fairly reliable representation of the overall population, and if we state upfront that we know this could well be a wrong assumption, we can then go on to the next step and ask: ‘What if a much larger population, with identical outcome distributions and averages, were tested in the same way, thus eliminating the problem with low counts?’

For some reason, this method is typically never employed, but it is the best, most accurate method to evaluate a population’s findings using the standard non-parametric measurement techniques already traditionally employed.

It ends up that a population of small n, with averages x1, x2 through xn, can have unreliable and statistically insignificant results whereas an identical population, consisting of twice as many individuals in each group with identical averages, could indeed have statistically significant results.

The two populations are identical in every way, except for size, and yet they have two opposing response patterns for a QA study. What do we do about this?

A more reliable way to interpret this population’s outcomes is to ask the additional question: ‘Assuming these outcomes are representative of our total population, or at least close to those of the larger population, if we then carry out the analysis of our results with this new population’s larger numbers, will we have more representative, truer statistical indicator results?’

The answer to this last question is ‘yes.’

If we duplicate the entire population, and re-run the analysis, we get more representative results that now are much more reliable indicators.
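The duplication step can be sketched in a few lines. The example below is a minimal illustration, not the procedure used to produce the figures in this review: the pre/post scores are hypothetical, `mann_whitney_p` is a helper written for this example, and it uses the tie-corrected normal approximation without a continuity correction, so its p values can differ slightly from those reported by statistical packages.

```python
import math
from collections import Counter
from statistics import mean


def mann_whitney_p(x, y):
    """Two-sided Mann-Whitney p value (normal approximation, tie-corrected)."""
    n1, n2 = len(x), len(y)
    n = n1 + n2
    pooled = sorted(x + y)
    # Midranks: tied scores share the average of the rank positions they occupy.
    rank_of = {}
    i = 0
    while i < n:
        j = i
        while j < n and pooled[j] == pooled[i]:
            j += 1
        rank_of[pooled[i]] = (i + 1 + j) / 2  # average of ranks i+1 .. j
        i = j
    u1 = sum(rank_of[v] for v in x) - n1 * (n1 + 1) / 2
    mean_u = n1 * n2 / 2
    tie_term = sum(t ** 3 - t for t in Counter(pooled).values())
    var_u = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if var_u == 0:  # every score identical, nothing to compare
        return 1.0
    z = (u1 - mean_u) / math.sqrt(var_u)
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical pre/post scores on a 1-6 scale (illustrative, not study data).
pre = [2, 3, 3, 4, 4, 5]
post = [3, 4, 4, 5, 5, 6]

results = {}
for k in range(1, 6):
    # Exact duplication: every response appears k times, so the means are unchanged.
    results[k] = mann_whitney_p(pre * k, post * k)
    print(f"{k}n: mean pre={mean(pre * k):.2f}, "
          f"mean post={mean(post * k):.2f}, p={results[k]:.4f}")

first_significant = next((k for k, p in results.items() if p < 0.05), None)
print("first replication with p < 0.05:", first_significant)
```

With these made-up scores the averages stay fixed at every step while the p value falls, crossing 0.05 at the first duplication. Real response sets will cross at a different multiple, or not at all.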

By changing the size of the population, you force the equation to be carried out with a modified take on the regression to the means or “approaching the true means” philosophy. It is already accepted that larger populations will result in truer and truer averages over time. These averages will increase and decrease, seemingly in some randomized fashion, but over time this average will approach a final value, a particular value that truly represents the overall population as a whole. The greater the percentage of the population taken and sampled, the truer the results.
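The way a running average wanders early on and then settles can be seen with a small simulation. This is only a sketch under stated assumptions: the responses are simulated as uniformly random scores on a 1-6 scale (so the true mean is 3.5), which is a stand-in chosen for illustration and not a model of any program's actual respondents.

```python
import random
from statistics import mean

random.seed(0)  # fixed seed so the run is repeatable

TRUE_MEAN = 3.5  # mean of a uniform 1-6 response scale (assumed for this sketch)
responses = [random.randint(1, 6) for _ in range(5000)]

running = {}
for n in (10, 50, 250, 1000, 5000):
    # Average of the first n simulated respondents.
    running[n] = mean(responses[:n])
    print(f"n={n:>4}: running mean={running[n]:.3f}, "
          f"distance from true mean={abs(running[n] - TRUE_MEAN):.3f}")
```

The early averages depend heavily on which few respondents happened to come first, while the later averages move very little as each new respondent is added.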

With chi squared statistics, the low n problem can be acknowledged as being present, along with the assumption that the population’s values represent values close to the mean and will thus be used to form a larger population that in turn will be tested in an identical fashion in order to eliminate the low n problem produced with chi squared equations. To do this, you simply double, triple, quadruple, quintuple, etc. the exact population values, and each time rerun the standard chi squared analysis with the much larger groups. Typically, by the time the 5x population group is tested under similar conditions, the end result will have approximated its final value. In rare cases, more than five tests are needed to produce a curve that is nearly tangential with the x-axis or final x-outcome, thus representing the total ending chi squared value for a larger population, had a larger population of similar results and types been tested.
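The multiply-and-rerun procedure for chi squared can be sketched as follows. This is a hedged illustration only: the 2x2 counts are hypothetical, `chi2_2x2` is a helper written for this example, and it computes the plain Pearson statistic with one degree of freedom and no Yates continuity correction, so results from a statistics package that applies the correction will differ.

```python
import math


def chi2_2x2(table):
    """Pearson chi squared statistic and p value (df = 1) for a 2x2 table of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    # With one degree of freedom, P(chi2 > stat) equals erfc(sqrt(stat / 2)).
    return stat, math.erfc(math.sqrt(stat / 2))


# Hypothetical counts (illustrative, not study data).
base = [[8, 4],
        [4, 8]]

stats, pvals = {}, {}
for k in range(1, 6):
    # Multiply every cell by k: proportions are unchanged, only the counts grow.
    scaled = [[k * cell for cell in row] for row in base]
    stats[k], pvals[k] = chi2_2x2(scaled)
    print(f"{k}x: chi squared={stats[k]:.3f}, p={pvals[k]:.4f}")
```

Because every observed and expected count is multiplied by the same k, the Pearson statistic itself is multiplied by k, and the p value falls steadily as copies are added. Plotting `pvals` against k gives the kind of curve that flattens toward the x-axis.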

This slight modification in methodology is not at all different from other types of assumptions made in developing your research methods. The decision to choose sample size x or 2x, for example, is recognized as a potential cause of errors in final outcomes. Certainly 2x is more representative than 1x, and it would be helpful to be able to deduce what degree of difference in outcomes might have been produced if you used one half of the final population for an initial study, for later comparison.

It ends up there are several more factors that influence responses and outcomes. This is why, when population sizes are increased, we experience the regression towards the means with larger and larger population reviews. The initial numbers entered into a dataset impact later outcomes. To overcome this impact, we generally have to double the number of entries. Doubling population sizes to unreasonable counts can make a project no longer doable or manageable.

This is exactly why national population studies usually make use of several hundred or a couple of thousand people at most. We can take the results of a study of 512 people + 15% error assumption in sampling, or approximately 600 people, and would have to double that to 1200 before the results of the larger population study could overcome the impacts of the outcomes generated by the first 600 participants.

It ends up that this rule is heavily used to review Medicaid and Medicare programs in large population settings. Between 1250 and 1550 is the standard range used for most studies of exceptionally large populations (i.e. Medicaid/Medicare program and patient satisfaction reviews). This is because it would be too costly to carry out the same study of a significantly larger population of 2500 individuals, or 5000, or 10,000, the changes in sample sizes one would have to make before becoming capable of modifying the smaller group outcome in a statistically significant fashion.

Not only is cost a limiting factor in this decision-making process, but so is the impact of the larger number on the original outcome. People are people, and populations are populations. One population, if well-defined, will not vary substantially from results of x1 and y1 when a similar study is carried out on an identical population of vastly different people producing outcomes of x1′ and y1′. There are differences, but it is the relationship between x and y that is more important than the difference between x1 and x1′. So the larger population, although it adds more accuracy and truth to the outcome, doesn’t change the credibility, reliability or validity of the study and its results. Your values are different, but not their meaning or relationship, once the n reached is sufficient.

This does not seem possible, but time and time again, in testing and retesting this hypothesis, the datasets provided have demonstrated that regression to the means (or truest outcome) occurs with chi squared analysis if the same calculations are made on the same population (only multiplied by various n’s). The multiplied datasets produce identical averages and overall findings, but truer and truer representations of chi and the relationship that it implies between the two groups of numbers.

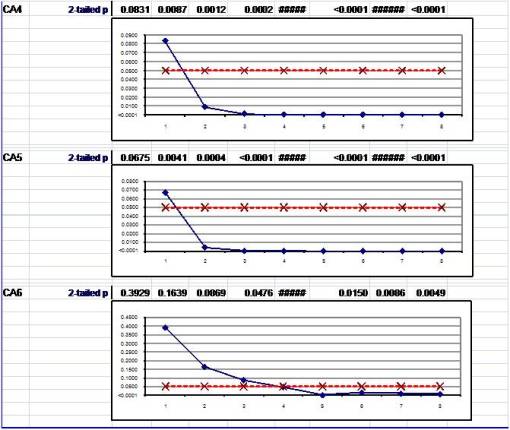

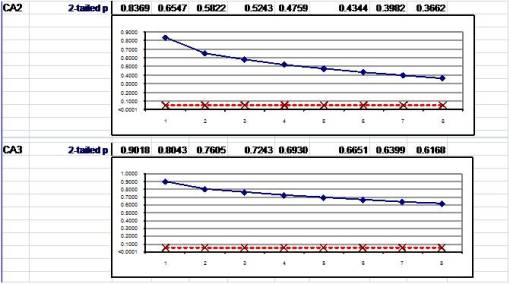

The following graphs represent these findings, in real world studies. What this methodology provides us with is the opportunity to avoid the error introduced by just taking the final result of a study with small n’s and reporting the results. These results are very likely to be misrepresentative of true and predictable outcomes. Reporting them as significant or not would be a researcher’s methodology error.

To eliminate the chance of this researcher’s error undermining a report, it is recommended that multiple chi squared techniques be used with multiples of a given true population’s outcomes. This gets rid of the formula-induced errors that interfere with such reports, and makes the report more applicable and slightly more valid as a public health/social epidemiological research instrument.

Figure 1. It is important to note that an exact duplication of the data sets was developed for each increase in n, so the histograms of results undergo no change, and the averages remain equal to those of the first group tested. The y-axis values change, but the appearance of the results in histogram form does not. In theory, when results are so few that not all of the possible responses were used, such as responses 1, 3 and 6 in the Pre for CA1, this absence of responses is maintained in the larger datasets. A second variation on this methodology allows for random inclusion of the missing responses, without producing a major change in averages, etc. This method results in a cancellation of effects, however, on both sides of each of the responses accumulated and presented for the first trial. In essence, each response may be considered a separate mode for a subset of the response group.

Figures 2 and 3. The following demonstrates an outcome of hypothetically testing the responses with the assumption that there were much larger response rates. In almost all cases, only one of four outcomes can be achieved when this test is performed. The first outcome is that if a result was already statistically significant, this conclusion does not change.

The second classical form of response pattern is the development of a statistically significant result from a trial 1 result that was not significant, in that it was placed well above the critical p required for statistical significance to exist. This outcome demonstrates that the p response recorded after trial 1 is mostly the result of a Type 2 error: statistical significance did exist in the response patterns, but the numbers were too low to demonstrate this, a limitation forced upon us by the equation format.

The three subsequent questions in the figures below also demonstrated this behavior with the outcomes. This type of outcome has been repeated hundreds of times now with the testing of this prediction model, using various program results to carry out this analysis of the methodology. In most cases, the critical value of n required for significance to be demonstrated reliably is 2 or 3 replications of the original n dataset (3n-4n). The production of a significant outcome at 5n is considered borderline at best.

Figure 4. The following represents two response patterns in which the original Mann-Whitney outcomes (>.8), pretty much identical in value to previously tested failures to reach significance, failed to demonstrate statistical significance as n was incrementally increased. Notice that even after 7 or 8 retests of the new dataset, significance was never reached.

An additional form of response not displayed here is the temporary spiking of MW results for trial 2. This recurred several times in the several hundred tests of application for this methodology, but was immediately corrected for or reversed with trial 3. The exact reason for this “blip” in the results remains uncertain, and it has never impacted final outcomes regarding values that tend towards a level above or below the critical p value.